Every AI coding tool ships with some version of the same pitch: tell the model what you want, and it writes the code. The pitch is mostly true. The code is mostly correct. But "correct" and "yours" are different things.

Over the past two years, the ecosystem has developed three distinct layers for guiding AI code generation: rules, skills, and now, taste. Each solves a different problem. Each operates at a different level of abstraction. Understanding the relationship between them is key to understanding where AI-assisted development is headed.

The Three Layers

Think of these as a stack. Rules form the foundation. Skills sit above them. Taste sits above everything.

Taste: Continuously learned from your behavior. Auto-managed. Compounds over time. Personal.

Skills: Reusable workflows and domain capabilities. Tutorials for agents. Triggered when needed.

Rules: Static, high-level constraints. Always present. Written once, rarely updated.

Each layer adds something the one below it cannot. Rules tell the agent what to avoid. Skills tell the agent what to do. Taste tells the agent how you do it.

Rules: The Static Foundation

Rules are the simplest layer. They are high-level, persistent constraints that are always active during code generation. If you have used .cursorrules, CLAUDE.md, or AGENTS.md, you have written rules.

Rules are good at encoding broad, stable preferences: "Use TypeScript," "Prefer functional components," "No default exports." They work best when the guidance is categorical and unlikely to change.

The problem is that rules are static. They are a snapshot of what you remembered to write down at the time you wrote them. Your codebase evolves. Your architecture changes. Your opinions shift. The rules do not update themselves.

Rules are like code comments written on the first pass. You have refactored three times since. The comments still describe version one.

Too few rules and the model ignores your style. Too many and they contradict each other. There is a narrow band where rules are useful, and developers consistently drift out of it because maintenance is manual and there is no feedback loop to tell you when a rule has gone stale.

Rules serve a purpose. They are the right tool for stable, high-level constraints that apply everywhere. But they are not learning. They are configuration.

Skills: Graduated Workflows for Agents

Skills are a step above rules. Where rules are passive constraints, skills are active capabilities: reusable, scoped workflows that an agent can invoke when needed. Think of them as tutorials for AI agents.

A skill might encode how to scaffold a REST API endpoint, how to write a migration, how to set up a testing harness, or how to structure a React component with proper error boundaries. Skills are triggered by context. They are not always on. They activate when the task matches.
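Here is a minimal Python sketch of what "triggered by context" could mean. It assumes a skill is just a named, fixed list of steps plus a set of trigger keywords. `Skill`, `select_skills`, and both example skills are hypothetical. Simple keyword matching stands in for whatever selection logic a real agent uses; an agent may instead let the model choose from each skill's description.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    name: str
    triggers: frozenset[str]  # hypothetical: keywords that activate the skill
    steps: tuple[str, ...]    # the authored procedure, identical for every user


def select_skills(task: str, skills: list[Skill]) -> list[Skill]:
    """Return the skills whose trigger keywords appear in the task description."""
    words = set(task.lower().split())
    return [s for s in skills if s.triggers & words]


SKILLS = [
    Skill("rest-endpoint", frozenset({"rest", "api", "endpoint"}),
          ("define the route", "validate input", "write the handler", "add tests")),
    Skill("migration", frozenset({"migration", "schema"}),
          ("write the up migration", "write the down migration", "run it on staging")),
]

active = select_skills("Add a REST endpoint for invoices", SKILLS)
print([s.name for s in active])  # ['rest-endpoint']
```

The steps are fixed when the skill is authored. Every developer whose task matches gets the same procedure.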

Skills meaningfully expand what an agent can do. They give it domain knowledge, multi-step procedures, and best practices that go beyond what raw model weights contain. They are particularly valuable for complex, repeatable workflows where getting the sequence right matters.

But skills have a limitation that mirrors the one rules have: they are authored and frozen. Someone wrote the skill. Someone decided the steps. Two developers using the same "Generate REST API endpoint" skill get the same output, regardless of whether one prefers named exports and typed error classes while the other co-locates schemas with handlers and uses structured error objects.

Skills encode what to do. They have no idea how you would do it. They increase an agent's capability without increasing its alignment to any particular developer.

Taste: The Continuous Learning Layer

Taste is the layer that learns. It sits above rules and skills because it conditions everything beneath it.

Where rules are written and skills are authored, taste is observed. It is not a file you maintain. It is a model of your preferences that builds itself from your behavior across sessions, learning your project context, your debugging patterns, your preferred approaches, and recalling them later without you having to write anything down.

Every interaction with the agent generates signal. An accept means the pattern was right. A reject means it was wrong. An edit reveals the delta between what was generated and what you actually wanted. A prompt reveals how you frame problems and what you consider important. These signals are not discarded. They are encoded into constraints that condition future generation.
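The following is a minimal sketch of how the three code-level signals could be captured. It assumes both the proposal and the code the developer finally kept are available as text. `Signal`, `polarity`, and `edit_delta` are hypothetical names, not Command Code's internal format, and prompt signals are left out. The edit delta comes from Python's standard `difflib.ndiff`.

```python
from dataclasses import dataclass
from difflib import ndiff
from typing import Literal, Optional

SignalKind = Literal["accept", "reject", "edit"]


@dataclass
class Signal:
    kind: SignalKind
    generated: str               # code the agent proposed
    final: Optional[str] = None  # code the developer kept (edits only)


def polarity(sig: Signal) -> int:
    """+1 if the proposal was kept as-is, -1 if discarded, 0 if partly kept."""
    return {"accept": 1, "reject": -1, "edit": 0}[sig.kind]


def edit_delta(sig: Signal) -> list[str]:
    """Lines removed ('- ') or added ('+ ') between the proposal and the final code."""
    if sig.kind != "edit" or sig.final is None:
        return []
    diff = ndiff(sig.generated.splitlines(), sig.final.splitlines())
    # ndiff also yields unchanged ('  ') and hint ('? ') lines; keep only real changes
    return [line for line in diff if line.startswith(("- ", "+ "))]


sig = Signal("edit",
             generated="export default function api() {}\n",
             final="export function api() {}\n")
print(polarity(sig), edit_delta(sig))
```

The delta carries the most information. Here it records that a default export was rewritten as a named export, which is exactly the kind of preference a learner could generalize.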

What Makes Taste Different

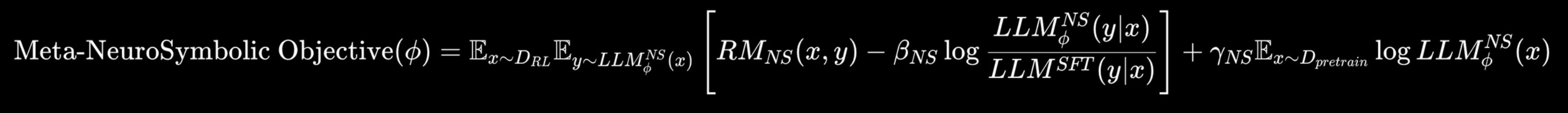

Taste is powered by taste-1, a meta neuro-symbolic AI model that combines the generative capabilities of large language models with a symbolic constraint system that captures and enforces your individual patterns. The architecture separates what the model knows (neural) from what it has learned about you (symbolic). The symbolic layer is lightweight, updates in real time, and provides interpretable reasoning paths.

Standard LLM generation samples from the model's learned distribution, shaped by internet-scale training data. Conditioned generation with taste-1 samples from a distribution shaped by your specific constraints:

```
// Standard generation
output = LLM(prompt)

// Conditioned generation with taste
output = LLM(prompt | taste(user))
```

Here the | symbol means "conditioned on," as in probability notation. The continuous-learning component learns the texture of your code from both explicit and implicit feedback. The meta neuro-symbolic model enforces the invisible logic of your choices. A reflective context engineering layer creates a self-aware RL feedback loop that refines its understanding with every session.
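The notation `LLM(prompt | taste(user))` leaves open how the conditioning actually happens. One simple way to approximate it is best-of-n reranking: sample several candidates from the unconditioned model and keep the one that satisfies the most heavily weighted learned constraints. This is a swapped-in technique, not taste-1's method. It only filters samples; it does not reshape the model's distribution. `Constraint`, `taste_score`, `conditioned_generate`, and the stub model are all hypothetical.

```python
import re
from dataclasses import dataclass
from itertools import cycle
from typing import Callable


@dataclass
class Constraint:
    rule: str                     # human-readable preference, as in taste.md
    check: Callable[[str], bool]  # True if a candidate follows the preference
    confidence: float             # how strongly the preference was observed


def taste_score(code: str, constraints: list[Constraint]) -> float:
    """Sum the confidence of every preference the candidate satisfies."""
    return sum(c.confidence for c in constraints if c.check(code))


def conditioned_generate(prompt: str,
                         sample: Callable[[str], str],
                         constraints: list[Constraint],
                         n: int = 4) -> str:
    """Best-of-n: draw n unconditioned samples, keep the best fit to the constraints."""
    candidates = [sample(prompt) for _ in range(n)]
    return max(candidates, key=lambda code: taste_score(code, constraints))


# Stub "model" that alternates between two styles (stands in for a real LLM call).
outputs = cycle(["export default function load() {}",
                 "export function load(): void {}"])


def stub_llm(prompt: str) -> str:
    return next(outputs)


constraints = [
    Constraint("Use named exports.", lambda c: "export default" not in c, 0.85),
    Constraint("Prefer explicit return types on exported functions.",
               lambda c: re.search(r"\)\s*:\s*\w+", c) is not None, 0.65),
]

print(conditioned_generate("write a loader", stub_llm, constraints, n=2))
```

Reranking is easy to reason about but costs n model calls per request. Approaches that condition the model directly avoid that overhead.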

Auto-Managed, Not Hand-Written

The critical distinction is that taste is auto-managed. The system creates and maintains its own files, folders, and structure. As your project grows, Command Code splits your taste into multiple taste packages: how you build APIs, how you write frontend components, how you wire the backend. It creates this taxonomy on its own and keeps it current.

The learned preferences are stored transparently in human-readable taste.md files within your project directory. You can inspect, edit, or reset them. But you never have to write them in the first place.

```markdown
## TypeScript
- Use strict mode. Confidence: 0.80
- Prefer explicit return types on exported functions. Confidence: 0.65
- Use type imports for type-only imports. Confidence: 0.90

## Exports
- Use named exports. Confidence: 0.85
- Group related exports in barrel files. Confidence: 0.70
- Avoid default exports except for page components. Confidence: 0.85

## Error Handling
- Use typed error classes. Confidence: 0.85
- Always include error codes. Confidence: 0.90
- Log to stderr, not stdout. Confidence: 0.75
```

This is learned, not written. Every confidence score reflects how strongly the system has observed the pattern in your behavior. High-confidence rules emerged from consistent actions across many sessions. Lower-confidence rules are still forming. The system is honest about what it knows.
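Because the file is plain markdown, it can be read programmatically as well as by eye. The following is a minimal parser sketch. It assumes the format is exactly what the example above shows: `## Section` headings followed by `- rule. Confidence: 0.80` bullets. The real files may carry more structure. `parse_taste` and `confident_rules` are hypothetical helpers.

```python
import re

# Matches bullets like "- Use strict mode. Confidence: 0.80" (format assumed from the example)
TASTE_LINE = re.compile(r"^-\s+(?P<rule>.+?)\s+Confidence:\s*(?P<conf>\d*\.?\d+)\s*$")


def parse_taste(text: str) -> dict[str, list[tuple[str, float]]]:
    """Group (rule, confidence) pairs under their '## Section' heading."""
    sections: dict[str, list[tuple[str, float]]] = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("## "):
            current = line[3:].strip()
            sections.setdefault(current, [])
        elif current is not None and (m := TASTE_LINE.match(line)):
            sections[current].append((m["rule"], float(m["conf"])))
    return sections


def confident_rules(sections: dict[str, list[tuple[str, float]]],
                    threshold: float = 0.8) -> list[str]:
    """Rules observed at or above the given confidence, in file order."""
    return [rule for entries in sections.values()
            for rule, conf in entries if conf >= threshold]


SAMPLE = """\
## TypeScript
- Use strict mode. Confidence: 0.80
- Prefer explicit return types on exported functions. Confidence: 0.65

## Exports
- Use named exports. Confidence: 0.85
- Avoid default exports except for page components. Confidence: 0.85
"""

prefs = parse_taste(SAMPLE)
print(confident_rules(prefs))
```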

The Learning Loop

The system operates on a continuous loop that runs on every interaction. There is no batch training, no scheduled update window. The agent adapts as you work.

This is what makes taste compound. The more you use it, the better the constraints. The better the constraints, the fewer corrections you make. The fewer corrections, the faster you ship. The correction loop that defines most AI coding experiences gradually disappears.
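The compounding effect can be illustrated with a toy confidence update that runs once per interaction. The update rule is an assumption chosen for clarity: a Laplace-smoothed ratio of how often a pattern was kept versus overridden. The article does not say how taste-1 computes its scores. `PatternStats` and `TasteLoop` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class PatternStats:
    followed: int = 0    # interactions where the developer kept the pattern
    overridden: int = 0  # interactions where they rejected or edited it away

    @property
    def confidence(self) -> float:
        # Laplace-smoothed rate: 0.5 with no evidence, approaches the observed rate
        return (self.followed + 1) / (self.followed + self.overridden + 2)


@dataclass
class TasteLoop:
    patterns: dict[str, PatternStats] = field(default_factory=dict)

    def observe(self, pattern: str, followed: bool) -> float:
        """Update one pattern after a single interaction and return its new confidence."""
        stats = self.patterns.setdefault(pattern, PatternStats())
        if followed:
            stats.followed += 1
        else:
            stats.overridden += 1
        return stats.confidence


loop = TasteLoop()
for _ in range(8):
    loop.observe("Use named exports.", followed=True)
print(round(loop.patterns["Use named exports."].confidence, 2))  # 0.9
```

Under this rule, a new pattern starts at 0.5. After eight consistent interactions it reaches 0.9, the same range as the highest scores in the taste.md example. A single override pulls it back down.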

The Full Comparison

| | Rules | Skills | Taste |
|---|---|---|---|
| Source | You write them | You author them | Learned from you |
| Presence | Always on | Triggered when needed | Always on, always evolving |
| Granularity | Broad guidelines | Workflow-level | Micro-decisions |
| Updates | When you remember | When you maintain | Every session, automatically |
| Over Time | Decays and drifts | Rots silently | Compounds accuracy |
| Output | Same for everyone | Same for everyone | Personal to you |
| Maintenance | Manual | Manual | Auto-managed |
| What It Adds | Constraints | Capability | Alignment |

These layers are not in competition. They are complementary. Rules provide the guardrails. Skills provide the knowledge. Taste provides the alignment. A well-configured coding agent uses all three. But the layer that matters most in the long run is the one that learns.

Sharing and Composing Taste

Individual learning is useful. Team learning is more powerful. Taste profiles are portable and composable. Senior engineers can encode their patterns. Teams can share conventions without maintaining static documentation. Open source maintainers can publish project-specific taste that contributors automatically adopt.

```shell
# Push your project taste to the registry
npx taste push --all

# Pull someone's CLI taste into your project
npx taste pull ahmadawais/cli
```

Your taste profiles are also available on your Command Code Studio profile, where you can inspect, compare, and compose them across projects.
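Composing profiles requires a policy for conflicts. The following is a minimal sketch. It assumes a profile maps each section to `{rule: confidence}` and that later profiles override earlier ones rule by rule. `compose` is hypothetical; it does not necessarily match how `npx taste pull` merges.

```python
Profile = dict[str, dict[str, float]]  # section -> {rule: confidence}


def compose(*profiles: Profile) -> Profile:
    """Layer profiles left to right; a later profile overrides earlier ones rule by rule."""
    merged: Profile = {}
    for profile in profiles:
        for section, rules in profile.items():
            merged.setdefault(section, {}).update(rules)  # copies, never aliases inputs
    return merged


team = {"Exports": {"Use named exports.": 0.85,
                    "Group related exports in barrel files.": 0.70}}
mine = {"Exports": {"Group related exports in barrel files.": 0.30},
        "Error Handling": {"Always include error codes.": 0.90}}

combined = compose(team, mine)
print(combined)
```

Layering `compose(team, mine)` keeps the team's conventions as defaults, while your own observed preferences win where they disagree. Taking the maximum confidence per rule would be an equally plausible policy.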

Results

We measured correction loops across common coding tasks. The pattern is consistent: taste compounds.

| Task type | Without taste | With taste, week 1 | With taste, month 1 |
|---|---|---|---|
| CLI scaffolding | 4.2 edits | 1.8 edits | 0.4 edits |
| API endpoint | 3.1 edits | 1.2 edits | 0.3 edits |
| React component | 3.8 edits | 1.5 edits | 0.5 edits |
| Test file | 2.9 edits | 0.9 edits | 0.2 edits |
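This short script converts the table's edit counts into relative reductions from the no-taste baseline. It uses only the figures in the table.

```python
# Edit counts from the table: (without taste, week 1, month 1)
EDITS = {
    "CLI scaffolding": (4.2, 1.8, 0.4),
    "API endpoint": (3.1, 1.2, 0.3),
    "React component": (3.8, 1.5, 0.5),
    "Test file": (2.9, 0.9, 0.2),
}


def reduction(before: float, after: float) -> float:
    """Fraction of edits eliminated relative to the baseline."""
    return 1 - after / before


for task, (base, week1, month1) in EDITS.items():
    print(f"{task}: {reduction(base, week1):.0%} fewer edits in week 1, "
          f"{reduction(base, month1):.0%} by month 1")
```

By this arithmetic, edits drop by roughly 57 to 69 percent in the first week and by 87 to 93 percent after a month.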

10x faster coding. 2x faster code reviews. 5x fewer bugs. These are the numbers after one month of continuous learning.

The Future of Coding Is Personal

The trajectory of AI coding tools has been toward more power: better models, longer context, faster generation. That is necessary but not sufficient. Power without alignment produces code that is impressive and impersonal. It is someone else's code, generated faster.

The missing layer has always been personalization that compounds. Not the kind you configure once and forget, but the kind that watches how you work, learns from what you accept and reject, and gradually converges on an understanding of how you think about code.

Rules set the boundaries. Skills provide the knowledge. Taste makes it yours.

Read the full Taste documentation.